Technical SEO Services That Fix What’s Stopping Google From Ranking You and LLMs From Recommending You

Your content is strong. Your backlinks are decent. Your keywords make sense. But rankings are stuck because your site has technical SEO issues.

We diagnose and fix the crawlability, indexation, rendering, and site architecture issues that keep your site invisible. On Google. In AI Overviews. And in LLM-generated answers, where your buyers are already searching.

Most SEO advice sounds identical.

“Optimize your meta descriptions.” “Add internal links.” “Make sure the site is mobile-friendly.”

You have done all of it. Rankings barely moved.

Google tried crawling your site last week and got stuck on 4,000 filter URLs that your navigation generated automatically. Your product pages were crawled once last month because Googlebot exhausted its crawl budget on parameter variations. That article you published last week still sits unindexed because Google has classified your domain as a thin-content publisher based on all those duplicate filter pages.

And now the problem is bigger than Google.

LLMs (ChatGPT, Gemini, Grok, Perplexity, etc.) are pulling answers from the web. But they are not pulling from just any page. They retrieve from pages that are clearly indexed, properly structured, and recognized by Google as authoritative. A site with crawl waste, indexation bloat, and architecture confusion does not get cited in AI-generated answers. It does not get recommended. It does not exist in that conversation.

Saiqic’s technical SEO services find problems hiding in server logs, rendering behavior, and indexation patterns, where most technical SEO experts never look.

Detailed Technical SEO Services to Find What Tools Miss

Standard audits check obvious items and call it done. We investigate why Google treats your site differently than it should.

JavaScript Rendering Diagnostics

We test what search engine crawlers and AI crawlers actually receive when they visit your site versus what a browser renders. We identify framework-level rendering failures, lazy-loading problems, and dynamic content that bots never see. Then we fix the gaps causing indexation failures and content invisibility across both Google and LLM retrieval systems.

Index Bloat Cleanup

We identify every URL variant that should not be indexed: parameter combinations, filter pages, session IDs, and duplicate content from faceted navigation. We implement containment through canonical directives, noindex rules, and URL parameter controls. Crawl budget gets redirected toward revenue-generating pages. Domain quality signals improve across both traditional search and AI retrieval.

Canonical Architecture & Redirect Chain Surgery

We audit every canonical relationship on the site for logic errors, circular references, and broken targets. We trace every redirect chain and implement direct routes that eliminate multi-hop paths. Google and LLMs both need clear, unambiguous signals about which version of a page is primary. We build that clarity into the site architecture.

Schema Markup for Rich Results

We implement structured data that does two things simultaneously. It qualifies pages for Google rich results: review snippets, FAQs, product markup, local business panels. And it builds clear entity signals that LLMs use to identify, categorize, and recommend your brand with confidence. Organization schema, author markup, product entities, and service definitions that make your brand legible to both search engines and AI systems.

Mobile-First Indexation Audit

We crawl your site as Googlebot Mobile and document every discrepancy between mobile and desktop content: missing content, incorrectly lazy-loaded images, collapsed sections that bots never expand, and internal links that are absent on mobile. We fix the mobile experience to match or exceed what was built for desktop.

Pagination and Orphaned Content Recovery

We restructure paginated content discovery so older pages remain visible to crawlers and do not get buried in the architecture. We identify orphaned pages with zero internal links, build internal link architecture that distributes crawl equity to important pages, and eliminate low-value orphans that dilute domain quality signals.

Who We Help When Rankings Drop Without a Clear Cause

We work with sites where something’s clearly broken but nobody can pinpoint what:

E-commerce Stores (product pages won’t rank despite strong metrics)

Multi-Location Businesses (city pages cannibalize each other)

SaaS Companies (feature pages disappeared after a redesign)

Content Publishers (old articles vanished despite social traffic)

Service Businesses (location + service combos create duplicate nightmares)

Migrated Sites (lost 50% of traffic with no explanation)

Enterprise Brands (subdomain structures send conflicting signals)

Do you have a unique niche and want to discuss it with us?

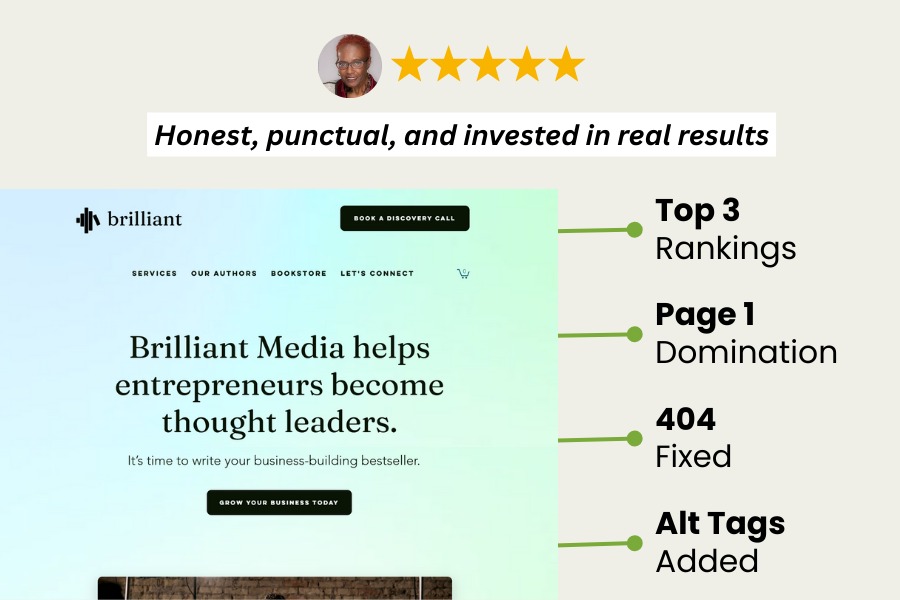

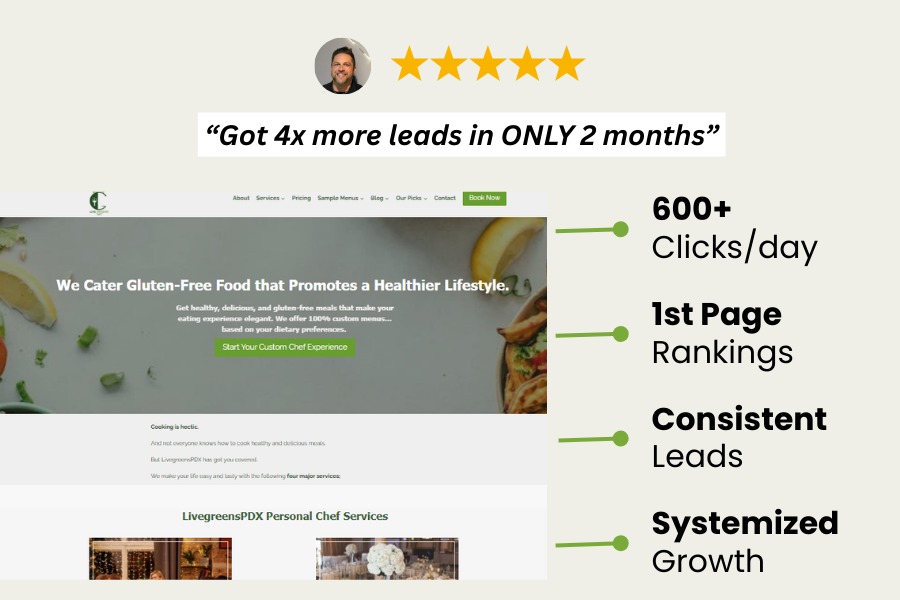

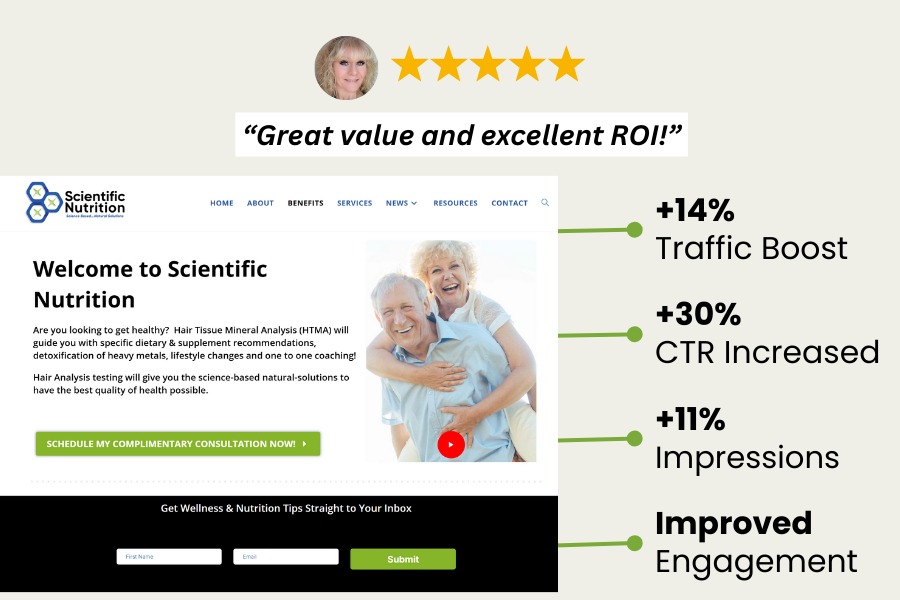

Have a look at our previous successful projects

Why Saiqic Is Your Best Choice For Technical SEO/AISEO Services

Where other agencies deliver only reports, Saiqic is the technical SEO company that delivers recoveries. Here’s what we do differently:

We Find What Automated Tools Are Built to Miss

Standard audits run Screaming Frog, flag missing alt tags, and deliver a spreadsheet. They will not find that your CDN returns 503 errors to Googlebot IPs while working normally for users. They will not find that your hreflang tags are syntactically correct but every target URL redirects, causing Google to ignore the entire international setup. We dig into logs, rendering tests, and index anomalies where those tools stop.
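To make the log check above concrete, here is a minimal sketch that counts response statuses separately for Googlebot and for everyone else. It assumes access logs in the common combined log format and identifies Googlebot by user-agent substring only. The sample lines and the function name `status_by_agent` are hypothetical, and a real investigation would also verify crawler IP addresses.

```python
import re
from collections import Counter, defaultdict

# Assumed combined log format:
# ip - - [time] "METHOD path HTTP/x" status size "referer" "user-agent"
LOG_LINE = re.compile(
    r'^\S+ \S+ \S+ \[[^\]]+\] "[^"]*" (?P<status>\d{3}) \S+ "[^"]*" "(?P<agent>[^"]*)"$'
)

def status_by_agent(lines):
    """Count HTTP status codes for Googlebot and for all other clients."""
    counts = defaultdict(Counter)
    for line in lines:
        match = LOG_LINE.match(line.strip())
        if not match:
            continue  # skip lines that do not follow the assumed format
        group = "googlebot" if "Googlebot" in match["agent"] else "other"
        counts[group][match["status"]] += 1
    return counts

# Hypothetical sample lines, for illustration only.
sample = [
    '66.249.66.1 - - [10/Mar/2025:10:00:00 +0000] "GET /products/a HTTP/1.1" 503 0 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
    '203.0.113.7 - - [10/Mar/2025:10:00:01 +0000] "GET /products/a HTTP/1.1" 200 5120 "-" '
    '"Mozilla/5.0 (Windows NT 10.0; Win64; x64)"',
    '66.249.66.1 - - [10/Mar/2025:10:00:02 +0000] "GET /products/b HTTP/1.1" 503 0 "-" '
    '"Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

result = status_by_agent(sample)
for group, statuses in result.items():
    print(group, dict(statuses))
```

A split like this, where crawlers receive 503 responses while regular visitors receive 200, is the kind of pattern a crawl-only audit would not surface, because the auditing tool is served the same pages as a normal user.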

We Diagnose the Actual Cause of Ranking Drops

Rankings collapsed four months ago. Most consultants blame an algorithm update and suggest publishing more content. We trace it to a robots.txt change that accidentally blocked the entire blog, combined with a migration that created three-hop redirect chains on 60 percent of URLs. Accurate diagnosis leads to fixes that recover traffic.
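As an illustration of the robots.txt scenario, the sketch below uses Python's standard `urllib.robotparser` to list which URLs a given rule set blocks. The robots.txt content, the example.com URLs, and the helper name `blocked_urls` are assumptions made for the example. Google's own parser may treat edge cases differently, so treat this as a first-pass check only.

```python
from urllib.robotparser import RobotFileParser

def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text disallows for user_agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]

# Hypothetical rule that was meant to block one path but catches the whole blog.
robots_txt = """\
User-agent: *
Disallow: /blog
"""

urls = [
    "https://example.com/blog/technical-seo-guide",
    "https://example.com/products/widget",
]

blocked = blocked_urls(robots_txt, urls)
print(blocked)
```

Running a check like this against a list of important URLs before and after a robots.txt change is one inexpensive way to catch an accidental block early.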

We Prioritize by Real Traffic Impact

Automated audits flag 800 missing meta descriptions as critical while ignoring that pagination created 15,000 duplicate URLs that consume your entire crawl budget. We separate issues that are actively destroying rankings from improvements that look good in reports. You know exactly what gets fixed first and why.

We Build Technical Infrastructure for Google and LLM Visibility

Most technical SEO work focuses exclusively on traditional search signals. In 2025, that is only half the job. LLMs retrieve from indexed, authoritative, clearly structured content. The same technical foundations that improve Google crawlability and indexation also determine whether AI systems can confidently retrieve and cite your site. We build for both from the start.

We Implement. We Do Not Just Recommend.

You do not need another 50-page PDF with 300 action items and no prioritization. You need someone who fixes the problems, monitors Google’s response, and adjusts when the first approach needs refinement. We stay through implementation and verify that changes produce measurable results.

6 Technical Problems That Silently Destroy Rankings and AI Visibility Simultaneously

These problems rarely appear in standard audits. Automated tools flag missing alt tags and slow images. They do not find the issues that actually explain why a site with strong content and decent links refuses to rank.

Crawl Budget Waste Caused by Index Bloat

You think your site has 800 pages. Google has indexed 3,200 because every filter, sort option, and navigation combination generated a new URL. Google sees a mass of thin, near-duplicate content and downgrades domain trust across every page. LLMs retrieve from the same index. A domain that Google classifies as low-quality based on index bloat does not get cited in AI-generated answers either. The indexation problem affects both channels.
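The gap between the page count you expect and the URL count Google sees can be estimated from a crawl export. The sketch below groups URLs by what remains after filter and sort parameters are stripped. The parameter list `NON_CONTENT_PARAMS`, the function names, and the sample URLs are hypothetical, and which parameters count as non-content depends entirely on the site.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical parameters that only filter, sort, or track; adjust per site.
NON_CONTENT_PARAMS = {"color", "size", "sort", "sessionid"}

def canonical_form(url):
    """Strip non-content parameters and normalize the order of the rest."""
    parts = urlsplit(url)
    kept = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if key.lower() not in NON_CONTENT_PARAMS
    ]
    query = urlencode(sorted(kept))
    return urlunsplit((parts.scheme, parts.netloc, parts.path, query, ""))

def group_variants(urls):
    """Map each canonical form to the list of crawled URLs that collapse into it."""
    groups = defaultdict(list)
    for url in urls:
        groups[canonical_form(url)].append(url)
    return dict(groups)

crawled = [
    "https://example.com/shoes",
    "https://example.com/shoes?color=red",
    "https://example.com/shoes?color=red&sort=price",
    "https://example.com/shoes?page=2",
]

groups = group_variants(crawled)
for canonical, variants in groups.items():
    print(len(variants), canonical)
```

In this sample, four crawled URLs collapse into two distinct pages. On a real site, groups with dozens or hundreds of variants are the candidates for canonical, noindex, or parameter handling.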

JavaScript Rendering Failures That Hide Content From Crawlers

Your site looks complete in a browser. Google’s crawler and LLM crawlers receive half-rendered HTML because the JavaScript framework does not render server-side correctly. Content that does not exist in the crawled version of the page cannot rank and cannot be cited. Most site owners never discover this because the browser experience looks completely normal. The problem only appears when you test what bots actually receive versus what users see.
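One simple way to test what bots receive versus what users see is to compare the visible text of the raw server response with the text of the fully rendered page. The sketch below assumes both HTML strings have already been captured (for example, one from a plain HTTP fetch and one from a headless browser) and covers only the comparison step. The class and function names and the sample markup are hypothetical.

```python
from html.parser import HTMLParser

class TextExtractor(HTMLParser):
    """Collect visible words, ignoring anything inside script or style tags."""

    def __init__(self):
        super().__init__()
        self.words = []
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words.extend(data.split())

def visible_words(html):
    extractor = TextExtractor()
    extractor.feed(html)
    extractor.close()
    return extractor.words

def missing_from_raw(raw_html, rendered_html):
    """Words present in the rendered page but absent from the raw response."""
    raw = set(visible_words(raw_html))
    return [word for word in visible_words(rendered_html) if word not in raw]

# Hypothetical client-side-rendered page: the raw response is an empty shell.
raw_html = '<html><body><div id="app"></div><script>renderApp()</script></body></html>'
rendered_html = (
    '<html><body><div id="app"><h1>Blue Widget</h1><p>In stock</p></div></body></html>'
)

missing = missing_from_raw(raw_html, rendered_html)
print(missing)
```

If the list of missing words contains the page's main heading, body copy, or product details, that content depends on JavaScript execution to exist at all, which is the situation described above.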

Canonical and Redirect Logic That Google Actively Ignores

Canonical tags exist on the site. But they point to paginated versions instead of primary pages, reference URLs that return 404s, or create circular references where page A canonicals to B and B canonicals back to A. Google ignores broken canonicals and selects its own preferred version, usually not the one intended. Redirect chains from old migrations add three and four hops between URLs. LLMs retrieving content follow the same logic: a page that Google cannot confidently identify as the primary version of something will not get cited as an authoritative source.
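Both failure modes described here, circular canonicals and multi-hop redirects, can be checked with the same traversal once the relationships are exported from a crawl. The sketch below assumes a plain dictionary mapping each URL to its canonical target or redirect destination. The function name `follow`, the `max_hops` limit, and the sample paths are hypothetical.

```python
def follow(mapping, start, max_hops=10):
    """Follow start through mapping and return (path, is_loop).

    A URL that maps to itself (a self-referencing canonical) ends the walk
    and is not treated as a loop.
    """
    path = [start]
    seen = {start}
    current = start
    while current in mapping and len(path) <= max_hops:
        target = mapping[current]
        if target == current:
            break
        path.append(target)
        if target in seen:
            return path, True  # we came back to a URL already visited
        seen.add(target)
        current = target
    return path, False

# Hypothetical canonical relationships: /a and /b point at each other.
canonicals = {"/a": "/b", "/b": "/a", "/c": "/c"}
loop_path, is_loop = follow(canonicals, "/a")
print(loop_path, is_loop)

# Hypothetical redirects left over from two migrations.
redirects = {"/old": "/older", "/older": "/interim", "/interim": "/final"}
chain, _ = follow(redirects, "/old")
hops = len(chain) - 1
print(chain, hops)
```

Any path flagged as a loop needs a single primary URL chosen, and any chain with more than one hop is a candidate for a direct redirect from the first URL to the last.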

Mobile-First Indexing Exposing Content Hidden on Mobile

Google ranks based on what mobile crawlers receive. But many sites collapse content into tabs, lazy-load images incorrectly, or hide sections on mobile that appear on desktop. The desktop version looks complete. The mobile version, which is the one being judged, is missing content, structured data, or internal links that exist only on the larger breakpoint. Rankings reflect the mobile experience, not the desktop one that was actually optimized.

Schema Markup That Validates but Does Not Qualify

The site has schema markup. Google’s Rich Results Test shows no errors. But the implementation is functionally useless: Article schema applied to product pages, LocalBusiness markup duplicated across 47 locations with identical phone numbers, review markup referencing ratings that do not exist on the page. Schema that is technically valid but does not reflect the actual content of the page does not qualify for rich results and does not generate the structured entity signals that LLMs use to identify and recommend brands with confidence.

International and Multi-Location Structures That Compete Instead of Cooperate

Hreflang tags are correctly implemented. But all three regional versions target the same keywords and appear in the same search results simultaneously rather than routing by location. For multi-location service businesses, city pages use near-identical content and cannibalize each other. Google cannot determine which version to surface for which searcher. LLMs attempting to recommend a business for a specific location face the same ambiguity and default to more clearly structured competitors.

Saiqic Diagnostic Process That Turns Guesswork Into Measurable Fixes

1. Diagnostic Investigation

We crawl your site, analyze 90 days of logs, test rendering, examine index coverage, and map how bots interact with pages. You get a diagnosis explaining why traffic isn’t where it should be.

2. Prioritized Fix Strategy

We separate critical issues that are actively hurting rankings from nice-to-have optimizations that won’t move numbers. You’ll know what delivers ROI and what’s busywork.

3. Direct Implementation

We implement fixes, test in staging, verify nothing breaks, and monitor Google’s response. If we can’t access your backend, we provide exact code your team can execute.

4. Impact Measurement & Adjustment

Every fix gets tracked against rankings, traffic, and crawl behavior. If something doesn’t produce the expected results, we adjust. The cycle is diagnose, fix, measure, refine.

5. Continuous Monitoring

We set up monitoring that catches issues fast: indexation drops, crawl errors, rendering failures. You won’t discover problems three months later.

Your Content Strategy Can’t Overcome Broken Technical Foundation

Publishing more won’t help if Google isn’t crawling what you have. Building backlinks won’t matter if pages aren’t indexed correctly or are buried in the architecture.

Saiqic’s advanced technical SEO services fix technical problems that make Google reluctant to rank you. We eliminate what wastes resources, restructure what confuses crawlers, and optimize what slows everything down.

You Might Have These Questions…

Most audits check shallow metrics with automated tools. They find alt tags and slow images but miss log issues, rendering problems, indexation bloat, and crawl waste. Passing an audit doesn’t mean Google crawls you efficiently. It means you don’t have obvious errors a tool can detect.

Most agencies focus on content and links because technical SEO requires specialized expertise. They’ll optimize meta tags but won’t analyze server logs, test rendering, or investigate indexation patterns. If your agency hasn’t shown you crawl budget analysis or index diagnostics, they’re doing surface work.

Platform limitations exist, but most technical issues come from configuration, not platform constraints. We’ve optimized sites on every major CMS and know how to improve crawl efficiency, fix indexation, and optimize rendering within whatever you’re using.

Book a call if you’re still confused and unsure what value Saiqic can offer you.